From Operations to Leadership: What 19 Years in Cybersecurity Actually Taught Me

I’m Brian Olson, a cybersecurity professional with deep experience in DFIR and network forensics—currently working in Big Tech. My mission? To share hard-won lessons not just with fellow experts, but with anyone learning and growing in security—especially those in smaller companies without the resources or teams a massive enterprise has. I started blogging to break down real-world strategies, honest tool reviews, and battle-tested workflows in plain language. Whether you’re an underdog SOC analyst or a curious IT pro tackling security for the first time, my goal is to make the practical side of cybersecurity a little less overwhelming—and a lot more actionable. Expect guides that cut through the noise, resources that are actually useful at the keyboard, and stories that prove you don’t need a giant budget to make a real impact. You’ll also find my takes on personal finance, real estate, and anything that helps us level up—in and out of work. Let’s connect. We’re all in this together!

I've spent the better part of two decades in cybersecurity — starting in entry-level IT, working through the trenches of incident response and threat detection, and eventually moving into engineering management. Along the way I've worked across military, government, and private sector environments, led security operations during one of the largest data breaches in history, and taught network forensics for SANS.

This post isn't a resume walkthrough. It's an attempt to distill the things that actually mattered — the technical investments that compounded over time, and the people skills that turned out to be just as important as any exploit chain I ever analyzed. If you're early or mid-career in tech and wondering what to double down on, this is what I'd tell you over coffee.

A caveat before we go further: I'm one person who traveled one path. These are opinions shaped by experience, not universal truths. Your mileage will vary, and that's fine.

The Technical Fundamentals That Compound

Linux Is Still a Cheat Code

In an industry full of specialized tools and certifications, plain old Linux fluency remains one of the rarest and most valuable skills I see — and it shouldn't be. The vast majority of the world's web infrastructure runs on Linux. Most security tools were originally built on Unix-based systems. And yet, a shocking number of security professionals are uncomfortable at a Linux command line.

Early in my career, I worked a geographically distributed compromise where the entire investigation hinged on being able to move efficiently across Linux systems. The analysts who could SSH into boxes, parse logs on the fly, and string together commands with pipes and loops were the ones who drove the investigation forward. Everyone else was waiting for someone to export data into a GUI.

If you take one thing from this section: get comfortable living in a terminal. Learn awk, sed, grep, and how to pipe them together. Understand SSH — not just how to connect, but proxies, tunnels, and port forwarding. These are the same techniques adversaries use, and understanding them makes you better on both sides of the fight.
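To make the pipe-and-filter idea concrete, here is a rough Python equivalent of a classic triage one-liner. It greps for failed SSH logins, pulls out the source address the way you would with awk, then counts and ranks like `sort | uniq -c | sort -rn`. It's a sketch, not a robust parser. The regex assumes the common OpenSSH "Failed password" message format, which varies across distros and versions. The sample lines are made up and use documentation-reserved IP addresses.

```python
import re
from collections import Counter

# Assumes the common OpenSSH "Failed password" format; adjust for your distro's logs.
FAILED_RE = re.compile(r"Failed password for (?:invalid user )?\S+ from (\S+) port \d+")


def count_failed_sources(lines):
    """Rough equivalent of a grep | awk | sort | uniq -c | sort -rn pipeline."""
    counts = Counter()
    for line in lines:
        match = FAILED_RE.search(line)   # grep + awk-style field extraction in one step
        if match:
            counts[match.group(1)] += 1  # uniq -c
    return counts.most_common()          # sort -rn: highest counts first


if __name__ == "__main__":
    sample = [
        "Mar  3 10:15:01 web01 sshd[1234]: Failed password for root from 203.0.113.5 port 52311 ssh2",
        "Mar  3 10:15:04 web01 sshd[1234]: Failed password for root from 203.0.113.5 port 52312 ssh2",
        "Mar  3 10:16:10 web01 sshd[1240]: Failed password for invalid user admin from 198.51.100.7 port 40022 ssh2",
        "Mar  3 10:17:00 web01 sshd[1250]: Accepted publickey for deploy from 192.0.2.10 port 50000 ssh2",
    ]
    for ip, n in count_failed_sources(sample):
        print(n, ip)
```

On a live box the shell version is usually faster to type. The point is that each stage (filter, extract, count, sort) is something you should be able to reason about in either form.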

Learn to Code (Update: It's Complicated Now)

When I originally gave this talk, my advice was straightforward: learn Python or Go. I resisted coding for years — I was a bash wizard, and bash got me pretty far. But there's a ceiling to what shell scripts can do when you're operating at the scale of millions of hosts. The moment I started writing Python, I realized how much time I'd been wasting. Not because bash is bad, but because Python gave me modularity, libraries, and tools that other people could actually maintain.

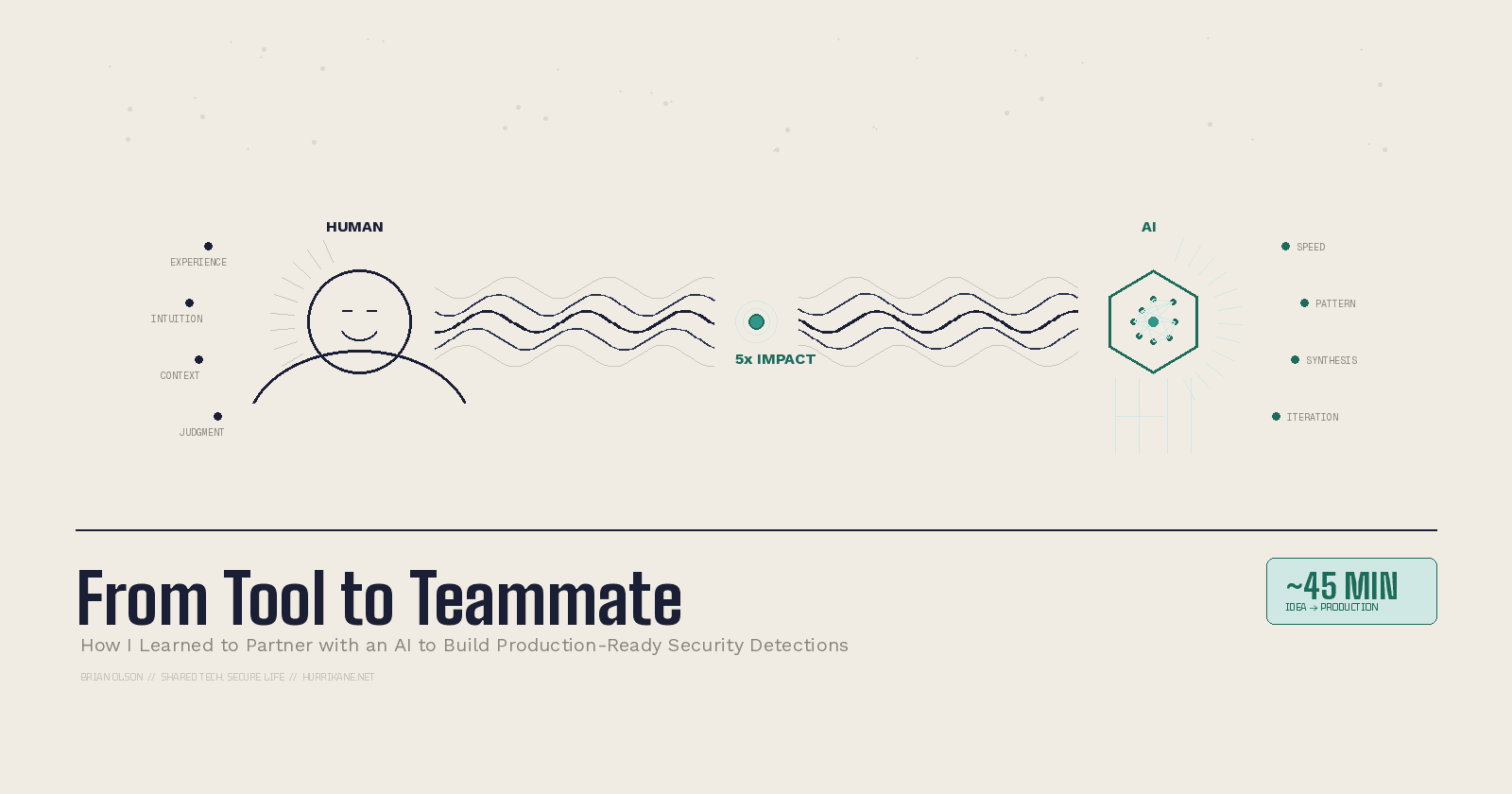

That advice isn't wrong, but it's incomplete in 2026. AI-assisted coding has changed the equation. You can generate functional Python with a well-crafted prompt, and for a lot of one-off security tooling, that's good enough. But "good enough" has a shelf life. AI can write the code — it can't tell you whether the code is doing the right thing, handling edge cases safely, or introducing a subtle vulnerability. You still need to read code critically, understand what it's doing, and know when the output is wrong.

So my updated take: the goal isn't necessarily grinding through Python tutorials from zero anymore. The goal is code literacy — understanding control flow, data structures, how libraries work, what good code looks like versus bad code. Whether you write it by hand or direct an AI to write it, you need to be the one who knows if it's correct. If you're starting from scratch, Ansible is still a decent on-ramp. It gets you thinking in terms of automation and repeatability without requiring you to become a software engineer overnight.

Security Engineering Is Where You Scale

There's a phase in most security careers where you realize that doing the work isn't enough — you need to build systems that do the work for you. That's the shift from operations to engineering.

Security engineering is about building and scaling security: automating the nonsense tasks so humans can focus on the work that actually requires judgment. Detection engineering, in particular, became the thing that let me multiply my impact far beyond what I could do as an individual analyst. It's matured a lot as a discipline since I started; there are dedicated roles, teams, and frameworks now that didn't exist a few years ago. If you're interested, Practical Threat Detection Engineering by Megan Roddie, Jason Deyalsingh, and Gary J. Katz is a solid starting point.

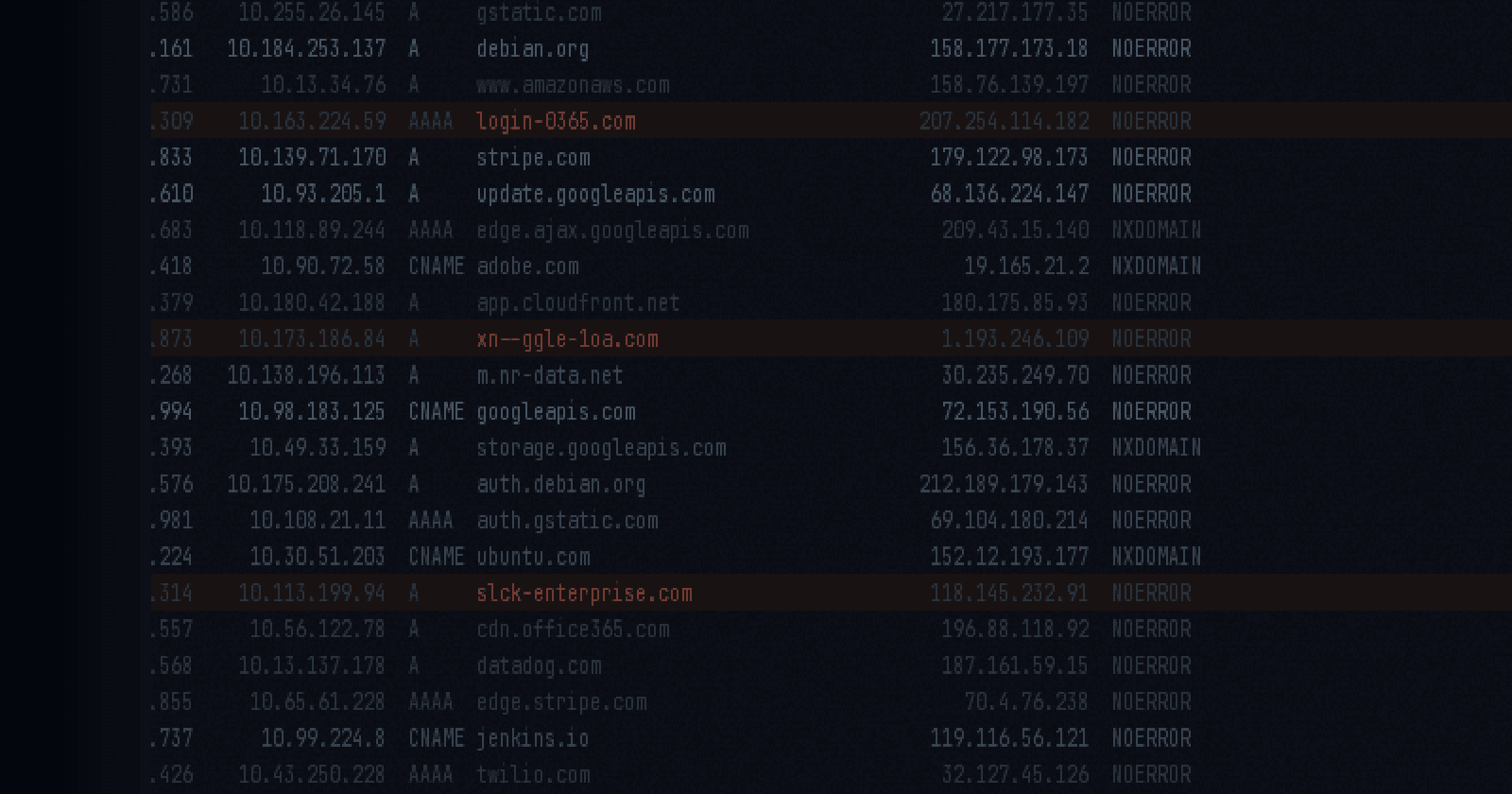
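As a small illustration of what "building systems that do the work" can look like, here is a minimal threshold detection in Python: flag a source IP once it racks up a set number of failed logins inside a sliding time window. The `AuthEvent` schema, the `detect_bruteforce` function, and the default of 5 failures in 60 seconds are hypothetical choices for illustration. A production rule would usually live in your SIEM's own rule or query language and need tuning against real baseline data.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthEvent:
    """Hypothetical normalized authentication event."""
    ts: float        # seconds since the epoch
    source_ip: str
    success: bool


def detect_bruteforce(events, threshold=5, window_s=60.0):
    """Yield (source_ip, ts) the first time a source reaches `threshold`
    failed logins within `window_s` seconds. Defaults are illustrative only."""
    recent = defaultdict(deque)  # source_ip -> timestamps of failures still in the window
    alerted = set()              # alert at most once per source to limit noise
    for ev in sorted(events, key=lambda e: e.ts):
        if ev.success:
            continue
        window = recent[ev.source_ip]
        window.append(ev.ts)
        # Evict failures that have aged out of the sliding window.
        while window and ev.ts - window[0] > window_s:
            window.popleft()
        if len(window) >= threshold and ev.source_ip not in alerted:
            alerted.add(ev.source_ip)
            yield ev.source_ip, ev.ts


if __name__ == "__main__":
    burst = [AuthEvent(float(t), "203.0.113.5", False) for t in range(0, 50, 10)]
    slow = [AuthEvent(float(t), "198.51.100.7", False) for t in range(0, 500, 100)]
    for ip, ts in detect_bruteforce(burst + slow):
        print(f"ALERT brute force from {ip} at t={ts}")
```

The fast burst trips the rule at t=40; the same five failures spread over several minutes do not. That trade-off between catching slow attackers and drowning in noise is exactly what detection engineers spend their time tuning.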

Log Analysis Is a Superpower

I'm not exaggerating here. The ability to aggregate, normalize, enrich, and search through terabytes of log data is one of the most powerful capabilities in security. SQL is underrated in this field. The Elastic Stack is still worth learning deeply, even as the SIEM landscape keeps shifting, because the underlying concepts of indexing, querying, and correlating log data transfer everywhere. And if you're doing forensics, SOF-ELK remains a great way to get hands-on fast.

The analysts I've seen grow fastest are the ones who treat log analysis not as a chore but as an investigative tool — a way to ask questions of the data and follow the answers wherever they lead.
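Here's a hedged sketch of those steps using nothing but Python's built-in `sqlite3` module: normalize records from two differently shaped sources into one table, enrich them by joining against an asset inventory, then aggregate and search with plain SQL. The field names, hosts, criticality labels, and the "one source denied on two or more hosts" heuristic are all hypothetical, made up for the example.

```python
import sqlite3

# Hypothetical raw records from two log sources that disagree on field names and verdicts.
RAW = [
    {"time": "2026-03-03T10:00:01Z", "dst_host": "web01", "src": "203.0.113.5", "verdict": "DROP"},
    {"time": "2026-03-03T10:00:02Z", "dst_host": "db01", "src": "203.0.113.5", "verdict": "DROP"},
    {"timestamp": "2026-03-03T10:00:03Z", "host": "web01", "source_ip": "198.51.100.7", "outcome": "blocked"},
    {"timestamp": "2026-03-03T10:00:04Z", "host": "web01", "source_ip": "192.0.2.10", "outcome": "allowed"},
]
# Hypothetical asset inventory used for enrichment: (host, criticality).
ASSETS = [("web01", "medium"), ("db01", "high")]
DENY_WORDS = {"drop", "deny", "blocked", "reject"}


def normalize(rec):
    """Map either source format onto one schema: (ts, host, src_ip, action)."""
    ts = rec.get("time") or rec.get("timestamp")
    host = rec.get("dst_host") or rec.get("host")
    src = rec.get("src") or rec.get("source_ip")
    verdict = (rec.get("verdict") or rec.get("outcome") or "").lower()
    return ts, host, src, "deny" if verdict in DENY_WORDS else "allow"


QUERY = """
SELECT e.src_ip,
       COUNT(*)               AS denied,
       COUNT(DISTINCT e.host) AS hosts_touched,
       SUM(CASE WHEN a.criticality = 'high' THEN 1 ELSE 0 END) AS high_crit_hits
FROM events AS e
LEFT JOIN assets AS a ON a.host = e.host  -- enrichment from the asset inventory
WHERE e.action = 'deny'
GROUP BY e.src_ip
HAVING COUNT(DISTINCT e.host) >= 2        -- one source denied on several hosts
ORDER BY denied DESC
"""


def suspicious_sources(raw, assets):
    """Load normalized events and assets into an in-memory DB and run QUERY."""
    con = sqlite3.connect(":memory:")
    try:
        con.execute("CREATE TABLE events (ts TEXT, host TEXT, src_ip TEXT, action TEXT)")
        con.execute("CREATE TABLE assets (host TEXT PRIMARY KEY, criticality TEXT)")
        con.executemany("INSERT INTO events VALUES (?, ?, ?, ?)", [normalize(r) for r in raw])
        con.executemany("INSERT INTO assets VALUES (?, ?)", assets)
        return con.execute(QUERY).fetchall()
    finally:
        con.close()


if __name__ == "__main__":
    for row in suspicious_sources(RAW, ASSETS):
        print(row)
```

The same shape (normalize, enrich via join, aggregate, filter) carries over to whatever SIEM or data platform you end up using; only the query syntax changes.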

Think Like the Attacker

This is well-trodden advice, but it bears repeating because it pays off so consistently. Understanding offensive security isn't about becoming a red teamer (though you can). It's about internalizing the kill chain so deeply that, when you're on the defensive side, you can recognize where an intruder is in the process and know what artifacts they've left behind.

You don't need to be an expert. You need enough exposure to think in terms of attacker workflows, not just defender checklists.
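One way to practice that kind of thinking is to make the mapping explicit. This sketch assumes the Lockheed Martin Cyber Kill Chain as the model (its seven phases are real); the artifact names and their phase assignments are hypothetical and deliberately coarse. Given a set of observed artifacts, it reports the furthest phase evidenced, earlier phases that still lack evidence, and the phase to hunt for next.

```python
from enum import IntEnum


class Phase(IntEnum):
    # Lockheed Martin Cyber Kill Chain phases, in order.
    RECONNAISSANCE = 1
    WEAPONIZATION = 2
    DELIVERY = 3
    EXPLOITATION = 4
    INSTALLATION = 5
    COMMAND_AND_CONTROL = 6
    ACTIONS_ON_OBJECTIVES = 7


# Hypothetical, deliberately coarse mapping of artifact types to phases.
ARTIFACT_PHASE = {
    "port_scan": Phase.RECONNAISSANCE,
    "phishing_email": Phase.DELIVERY,
    "office_macro_exec": Phase.EXPLOITATION,
    "new_scheduled_task": Phase.INSTALLATION,
    "beaconing_dns": Phase.COMMAND_AND_CONTROL,
    "large_outbound_archive": Phase.ACTIONS_ON_OBJECTIVES,
}


def assess(artifacts):
    """Return (furthest phase evidenced, earlier phases lacking evidence, next phase to hunt for)."""
    seen = {ARTIFACT_PHASE[a] for a in artifacts if a in ARTIFACT_PHASE}
    if not seen:
        return None, [], None
    furthest = max(seen)
    # Weaponization happens on the attacker's side, so defenders rarely see artifacts for it.
    gaps = [p for p in Phase if p < furthest and p not in seen and p is not Phase.WEAPONIZATION]
    nxt = Phase(furthest + 1) if furthest < max(Phase) else None
    return furthest, gaps, nxt


if __name__ == "__main__":
    print(assess(["beaconing_dns", "phishing_email"]))
```

Seeing C2 beaconing and a phishing email, for example, tells you to go looking for the exploitation and installation artifacts you haven't found yet, and to watch for actions on objectives.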

The People Skills Nobody Warned Me About

I spent the first chunk of my career thinking that if I just got technically sharp enough, everything else would follow. It doesn't. At some point, the thing holding you back isn't what you know — it's how you work with other people.

Cybersecurity Is a Team Sport

No one knows everything. I've worked with brilliant analysts who could reverse-engineer malware in their sleep but couldn't communicate their findings to a non-technical stakeholder. I've seen investigations stall not because of technical limitations, but because team members didn't trust each other enough to delegate.

Some of the most intense professional experiences I've had were in war rooms — those high-pressure, all-hands-on-deck incident response scenarios where everything is on fire and the clock is running. The teams that performed best weren't the ones with the most talented individuals. They were the ones where people had built relationships before the crisis hit. Where they'd grabbed lunch together, knew each other's strengths, and had enough trust to hand off critical tasks without micromanaging.

This has only gotten harder with distributed teams. When half the war room is on Zoom and the other half is in a Slack thread, the relationships you didn't build in advance become painfully obvious. Build them now, before you need them.

Think Out Loud

This is a small habit that will save you more time than almost any tool you install. When you're working a problem, verbalize your thought process. Share your assumptions. Talk through your reasoning with whoever is nearby.

Two things happen when you do this. First, someone will challenge an assumption you didn't realize you were making, saving you hours of going down the wrong path. Second, you create a shared understanding across the team — everyone knows where you are, what you're thinking, and how they can help.

I've watched analysts sit silently at their desk for four hours, stuck on a problem, because they didn't want to look like they didn't know the answer. Meanwhile, the person sitting ten feet away had the exact piece of context they needed.

In a distributed world, "thinking out loud" looks different — it's a running thread in Slack, a working doc with your notes, a quick voice message to a teammate. The medium changed; the principle didn't. Make your thought process visible. Ask questions. It's not a sign of weakness — it's how good teams operate.

Understand What Motivates People

I was slow to learn this one. When you're deep in security, it's easy to see the world in terms of risk and compliance — things are either secure or they're not. But the people you work with have their own priorities, pressures, and incentives, and those don't always align with yours.

I once worked with a kernel developer who pushed back hard on a security recommendation. My instinct was frustration — why wouldn't they just do the obviously correct thing? But when I took the time to sit down with them, understand their constraints, and figure out what they were optimizing for, we found a compromise that addressed the security concern without blowing up their roadmap.

Sometimes the best security work you can do is grab coffee with someone and ask them what's keeping them up at night.

Validate Your Assumptions (Relentlessly)

Keep a running list of assumptions. Check them. Then check them again.

I learned this lesson in a way I'll never forget. During an investigation, everyone on the team accepted as fact that compromised systems had wiped themselves — which meant we were working with limited evidence. I pushed back on that assumption, dug into the specifics, and discovered it wasn't true. That single act of questioning what everyone 'knew' led to recovering ten times more evidence than we thought existed — and identifying ten times more victims. Those aren't just numbers. Those are people whose compromised data would have gone unnoticed if we'd kept operating on a bad assumption.

Assumptions are necessary — you can't operate without them. But unvalidated assumptions are the single biggest source of error I've seen in security operations. Write them down. Revisit them. Don't let them calcify into facts.

Winning Means Compromise

I fought this one for a while. Security is important, but it's rarely the primary purpose of the organization. The business has goals, and your job is to help it reach them securely, not to block everything that carries risk. Everything carries some risk.

The best security outcomes I've been part of involved finding an amicable middle ground. Sometimes that means accepting compensating controls instead of your ideal solution. Sometimes it means giving more ground than you're comfortable with. But a compromise that gets implemented beats a perfect solution that gets vetoed every single time.

Stalemate is the worst outcome. Nothing changes, nobody improves, and you've spent political capital for zero return.

Balance Optimism and Pessimism

Security people tend to skew pessimistic — we're trained to see what's broken. But unchecked pessimism makes you the person who says "no" to everything, and eventually people stop asking you altogether.

On the flip side, unchecked optimism is just as dangerous. Overpromising and underdelivering leads to burnout, missed deadlines, and people who stop believing what you tell them.

The sweet spot is informed realism: honest about the risks, practical about the solutions, and generous in assuming that the people around you are acting with good intent until proven otherwise. Start every interaction assuming the best about the other person. You'll be right more often than you think.

Build a Culture of Learning (and Blamelessness)

This industry moves too fast for anyone to know it all. The moment you accept that — really accept it — you stop pretending and start learning.

The best teams I've been on had a culture where it was safe to say "I don't know." Where mistakes were treated as learning opportunities, not career-ending events. When something goes wrong, extract the lessons, share them widely, and move on. Never blame. Never fault someone for a mistake made in good faith.

Fostering curiosity is simpler than people think. Just keep asking "why." Why did this alert fire? Why did we build the process this way? Why do we assume that's true? Create space for those questions and the answers tend to follow.

The Shift: From Doing to Multiplying

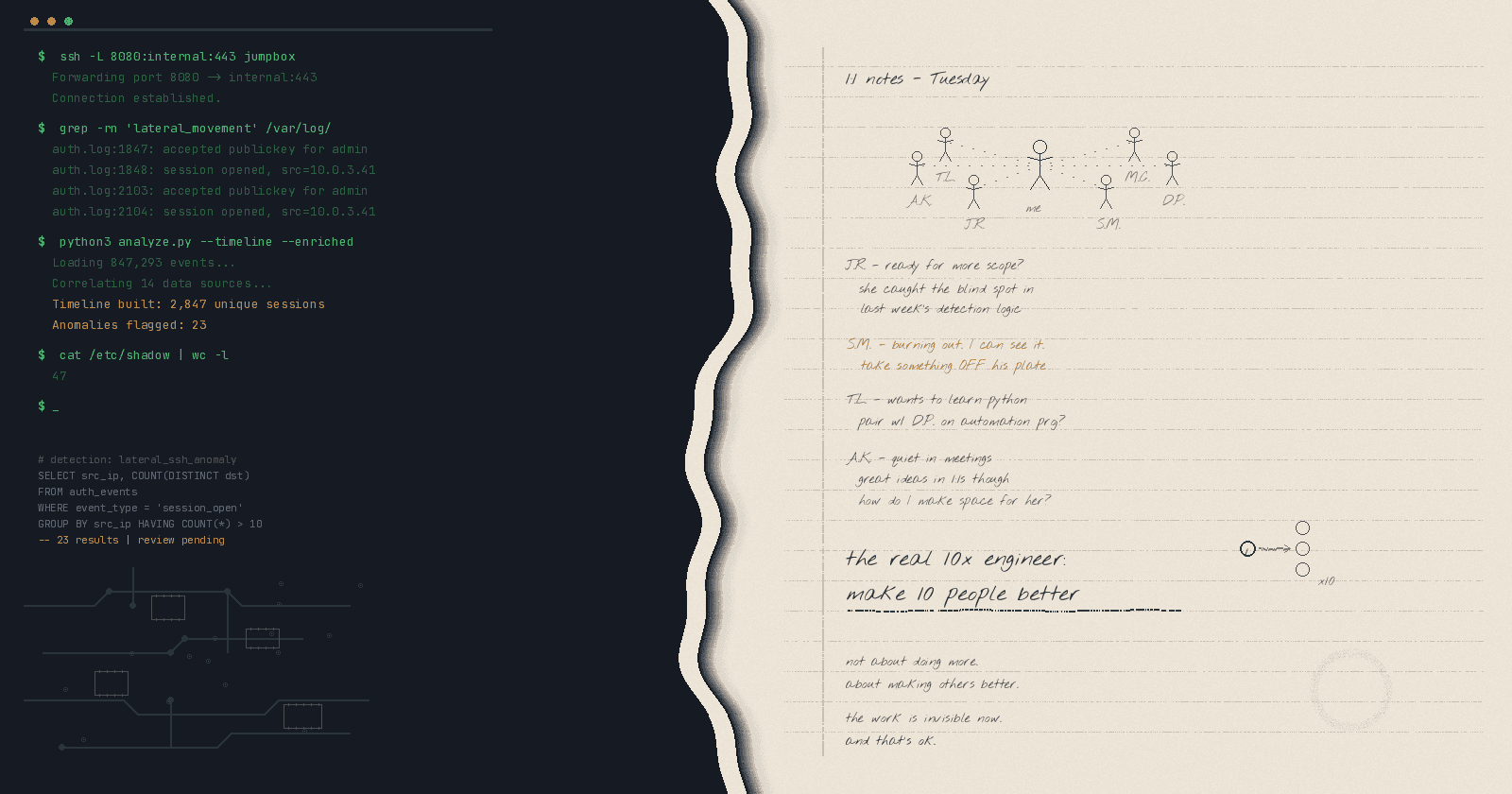

The biggest shift in my career wasn't a promotion or a job change. It was the moment I realized that stepping back from "doing" and stepping into mentoring would let me multiply my impact in a way that individual contribution never could.

As an individual contributor, your impact is bounded by your own time and energy. As a leader — formal or informal — you can help ten people avoid the burnout you experienced, develop skills faster than you did, and solve problems you never would have gotten to on your own. That's the real 10x engineer: not someone who writes ten times more code, but someone who makes ten people around them better.

It's not an easy transition. You have to let go of being the person with the answers and become the person who asks the right questions. You have to trust your team to execute, even when you could do it faster yourself. And you have to accept that your most important work is now invisible — the crisis that never happened because you helped someone grow, the burnout that didn't claim a talented analyst because you caught the signs early.

A Few More Things (From the Appendix of My Brain)

Own your career. Your manager isn't going to remember everything you accomplished. When evaluation cycles come around, advocate for yourself. It's not bragging — it's accurate reporting.

Go deep and wide. The generalist vs. specialist debate never made much sense to me. Get broad exposure early, then go deep where your curiosity pulls you. You can always broaden again later.

Don't just fix it — understand it. The quick fix gets the ticket closed. Understanding why it broke in the first place prevents the next ten tickets.

Take the challenges nobody wants. The ugliest, most ambiguous, most thankless problems are where the most valuable experience lives. Most people take the easy wins. Push yourself toward the hard ones.

Perfection is impossible. We learn textbook-perfect security and then immediately discover that everything in the real world is broken. The skill isn't achieving perfection — it's making good calls in the gap between how things should work and how they actually do.

This post is adapted from a talk I gave at the Augusta ISSA chapter and the SANS APAC DFIR Summit; you can watch the SANS recording on YouTube. If you want to go deeper on the network forensics and threat hunting side, I'm teaching FOR572 at SANS Security West in San Diego this May. And if you just want to talk about career growth, security engineering, or anything in between, find me on LinkedIn or reach out at brian@hurrikane.net.