From Tool to Teammate: How I Learned to Partner with an AI to Build Production-Ready Security Detections

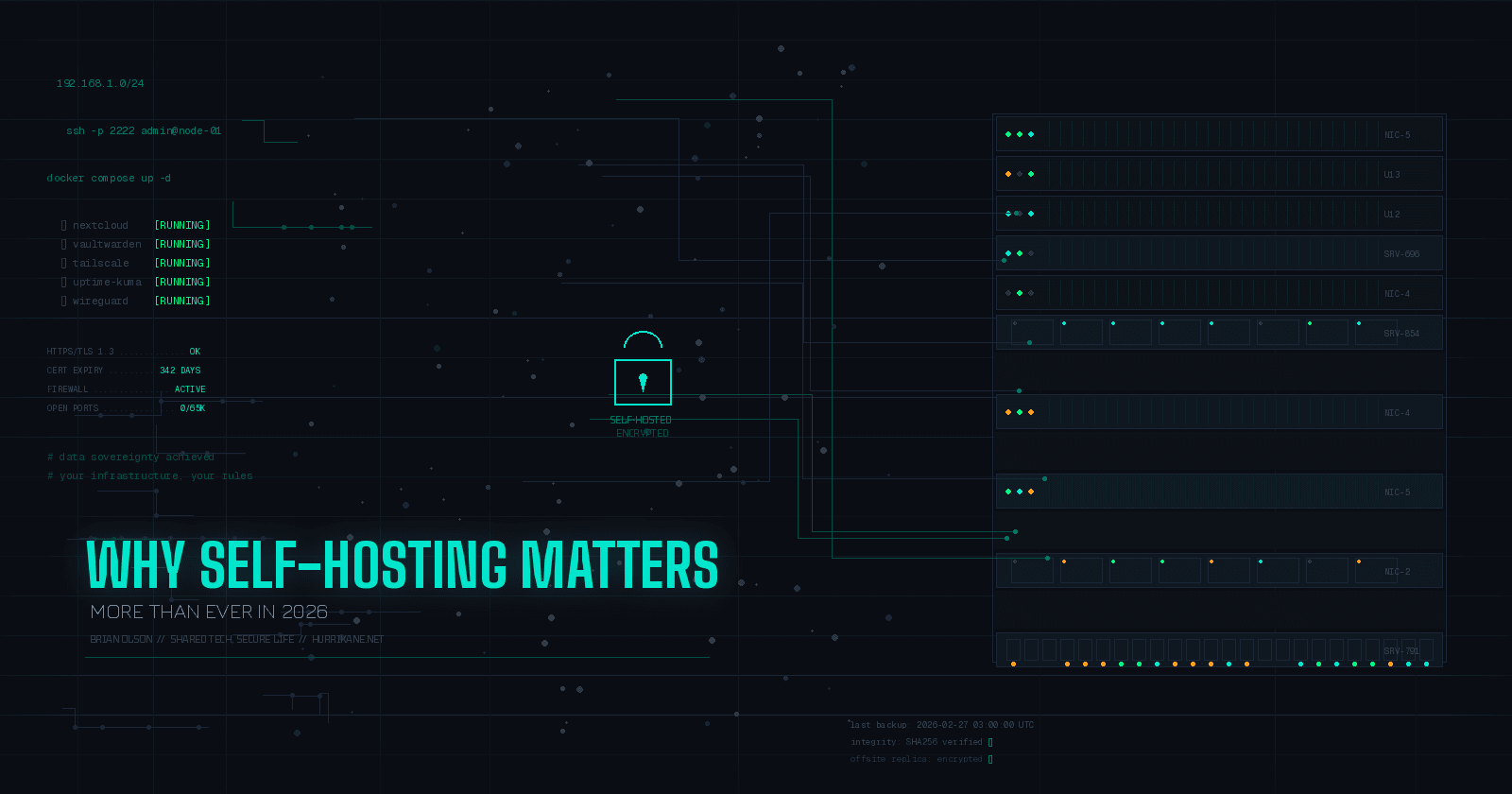

I’m Brian Olson, a cybersecurity professional with deep experience in DFIR and network forensics—currently working in Big Tech. My mission? To share hard-won lessons not just with fellow experts, but with anyone learning and growing in security—especially those in smaller companies without the resources or teams a massive enterprise has. I started blogging to break down real-world strategies, honest tool reviews, and battle-tested workflows in plain language. Whether you’re an underdog SOC analyst or a curious IT pro tackling security for the first time, my goal is to make the practical side of cybersecurity a little less overwhelming—and a lot more actionable. Expect guides that cut through the noise, resources that are actually useful at the keyboard, and stories that prove you don’t need a giant budget to make a real impact. You’ll also find my takes on personal finance, real estate, and anything that helps us level up—in and out of work. Let’s connect. We’re all in this together!

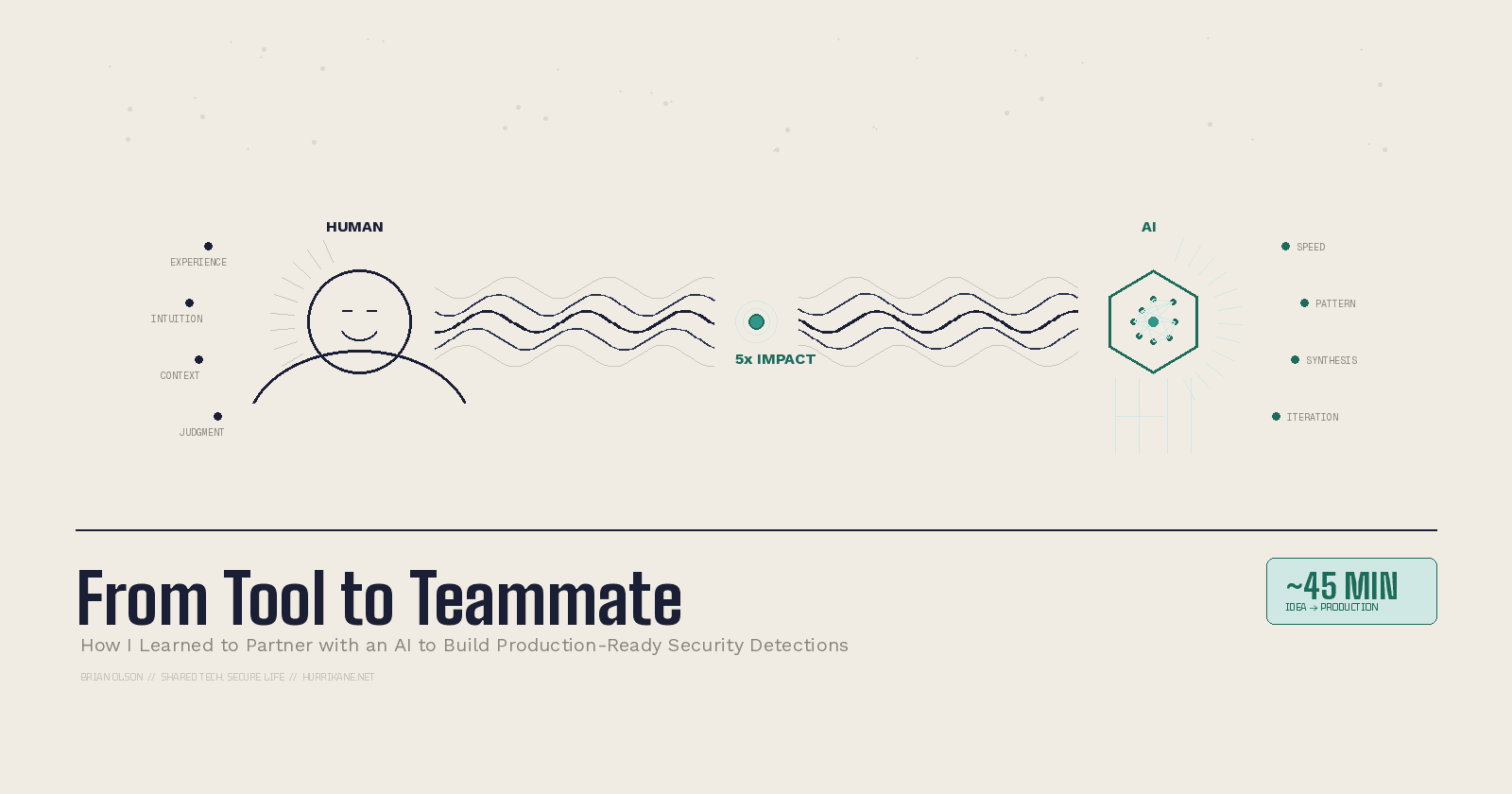

I'm going to tell you a story about how I compressed a multi-week detection engineering project into a single 45-minute session. And then I'm going to tell you exactly how I did it—because I think this capability is accessible to every engineer reading this right now.

But first, some context on how I got here.

My AI Journey (So Far)

My relationship with AI has gone through distinct phases, and if you're a security engineer or developer, you've probably experienced something similar.

It started with search. I replaced Google for research tasks and turned 30-minute deep dives into sub-one-minute summaries. Useful, but not transformative.

Then came the code phase—copy-pasting generated snippets into my projects. Effective in spurts, but slow and inefficient. The AI would lose context, and I'd spend as much time fixing its output as I saved generating it.

Next was Agentic v1 with tools like Aider. I could see the potential (an AI that could actually read and modify files in my codebase), but the tool wasn't ready. It produced bad code and couldn't maintain focus across complex tasks. I'm not putting it all on Aider, though; part of the problem was likely where I was on my own AI learning curve.

Then Claude happened. Agentic v2 was the game changer. The moment I could truly see how agentic AI would transform how we work. Not someday—now.

And on the horizon? Agentic v3: agents orchestrating agents. Specialized AI collaborators tackling complex, multi-domain problems together. But that's a topic for another post.

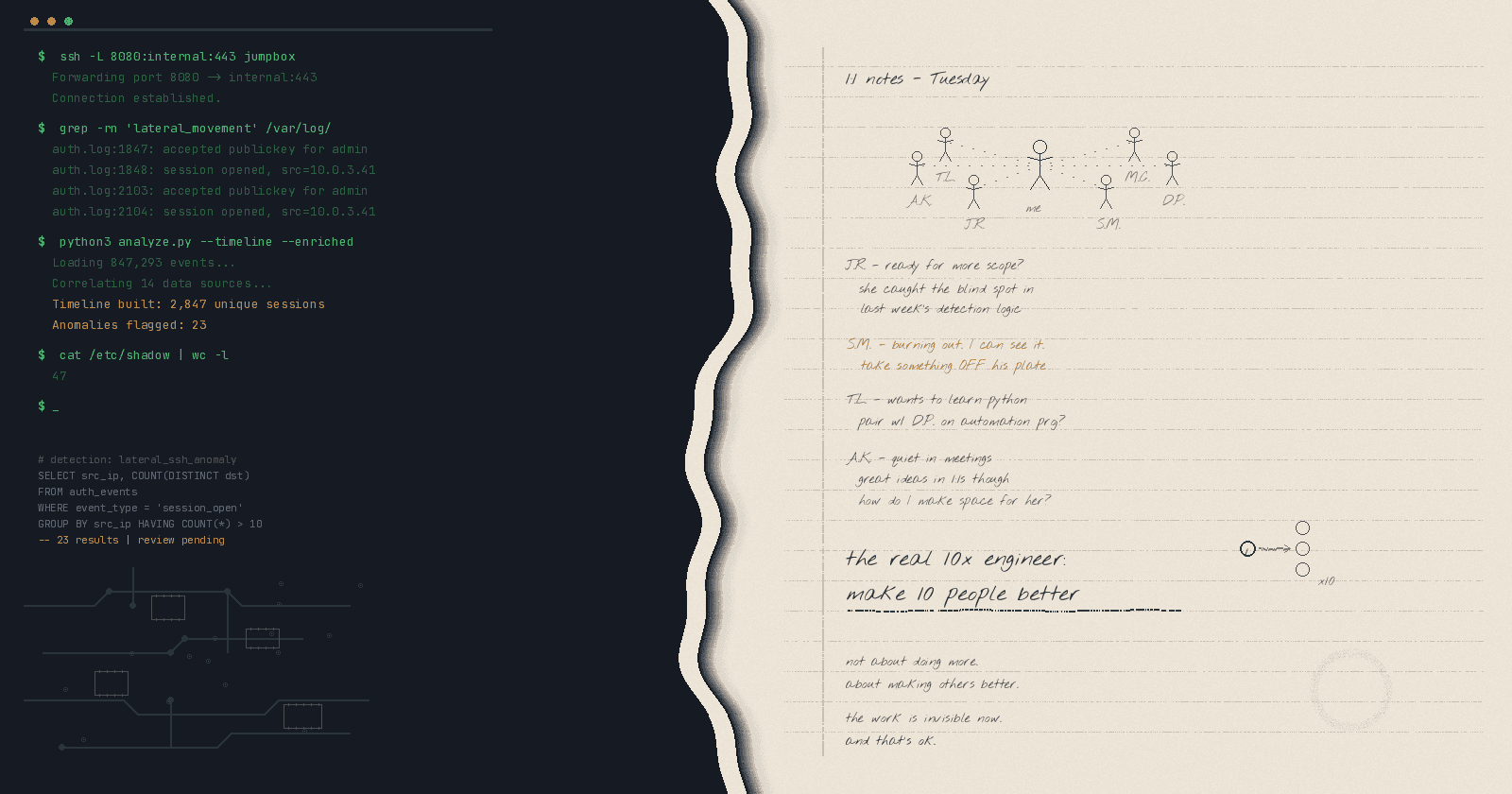

The key insight across this journey: this isn't about replacing our skills; it's about amplifying them. It's a shift from being a lone developer to operating as a developer-plus-AI team. I can see how this capability could 5x an individual's impact this year, and potentially far more as we get comfortable with its use and the technology keeps improving daily.

My goal today is to show you a real, practical example of how this works.

The Proving Ground: Can an AI Tackle a Real Security Challenge?

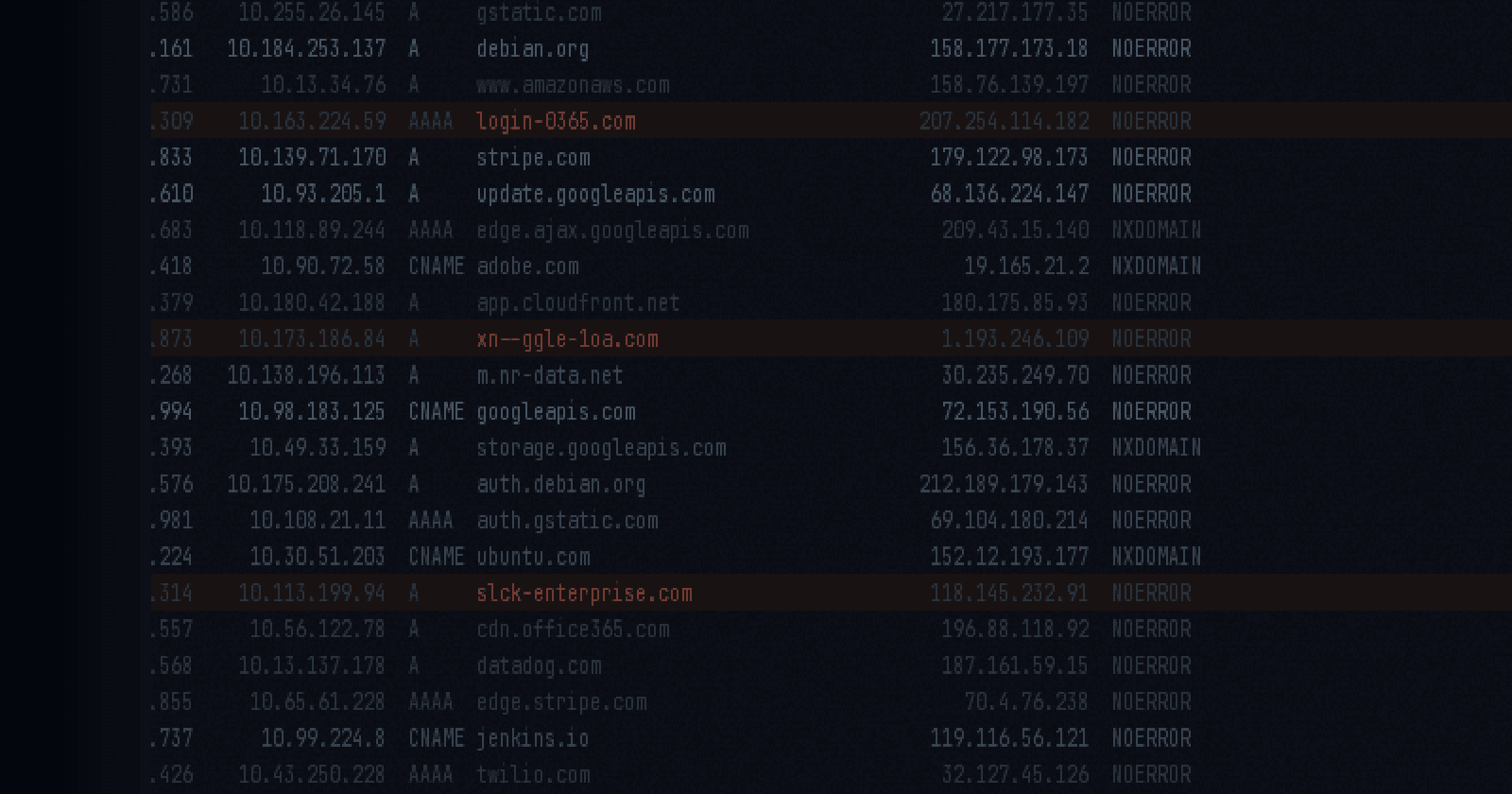

I wanted to test this with something that matters—not a toy problem or a tutorial exercise, but a genuine detection engineering challenge: finding anomalous DNS queries in a corporate environment.

If you work in security, you know this is a classic problem. It requires deep knowledge of your data, your systems, and attacker techniques. Normally, this is a multi-week project involving research, prototyping, testing, and tuning.

I happened to have a surprisingly open calendar one afternoon, so I decided to run an experiment.

Total time spent working with the AI: approximately 45 minutes.

Step 1: I Gave the AI a Job Title and an Onboarding Packet

This was the breakthrough that changed everything. Instead of just asking the AI to "write me a DNS detection query," I treated it like a new team member showing up on day one.

I gave it a persona: "You are a detection engineer working on the Infrastructure Security Monitoring team. We primarily use our internal tooling X, Y and Z to collect, enrich, search, build detections and investigate alerts."

Then I gave it context: I pointed it to internal documentation about our team, our platform, and our data structures. I told it to read up and add findings to its working memory.

The key takeaway? By providing a persona and access to our internal documentation, the AI instantly gained the context needed to operate as a team member, not just a code generator. It understood our naming conventions, our data schemas, and our operational constraints before writing a single line of code.
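To make the "job title plus onboarding packet" idea concrete, here is a minimal sketch of assembling a persona and a few reference documents into a single prompt. It assumes you are pasting documentation into the conversation rather than pointing an agent at files, and everything in it (`ContextDoc`, `build_onboarding_prompt`, the placeholder document text) is hypothetical and not tied to any particular AI tool or API.

```python
from dataclasses import dataclass


@dataclass
class ContextDoc:
    """One piece of onboarding material (hypothetical structure)."""
    title: str
    body: str


def build_onboarding_prompt(persona: str, docs: list[ContextDoc]) -> str:
    """Combine a persona statement and reference docs into one prompt string."""
    sections = [persona.strip(), "", "Reference material to read before answering:"]
    for doc in docs:
        sections.append(f"## {doc.title}")
        sections.append(doc.body.strip())
    sections.append("")
    sections.append("Summarize what you learned, then ask any clarifying questions.")
    return "\n".join(sections)


# Persona text is taken from the post; the document bodies are placeholders.
persona = (
    "You are a detection engineer working on the Infrastructure Security "
    "Monitoring team."
)
docs = [
    ContextDoc("Team overview", "Placeholder text for the team wiki page."),
    ContextDoc("DNS log schema", "Placeholder text describing the DNS tables."),
]
prompt = build_onboarding_prompt(persona, docs)
print(prompt)
```

The closing instruction in the prompt is a design choice: asking for a summary and questions up front gives you a quick check that the context actually landed before any code gets written.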

Step 2: I Asked for a Plan. It Gave Me a Full-Blown Strategy.

My prompt was deliberately vague: "I want to find anomalous DNS queries in corp. Plan?"

What came back wasn't just a plan; it was a comprehensive strategy. The AI first performed a gap analysis of our existing detections, identifying what we already covered (exfiltration/tunneling, behavioral anomalies) and where the gaps were (volume-based spikes, domain entropy, TLD anomalies). The gap analysis wasn't completely accurate, but I suspect that's because our documentation lags behind what we actually have deployed. Fixing that is a project for another day.

Then it recommended four detection approaches: statistical baseline detection as a quick win, entropy and length-based detection for DGA identification, answer-based anomaly detection analyzing DNS response types and TTL values, and a composite scoring model combining multiple signals for higher-fidelity alerts.
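For readers who haven't seen entropy-based DGA detection before, here is a minimal sketch of the idea: algorithmically generated names tend to be long and to spread their characters more evenly than human-chosen names, which shows up as higher Shannon entropy. This is an illustration, not the logic from the session. The function names, the leftmost-label shortcut, and both thresholds are assumptions that would need tuning against real traffic.

```python
import math
from collections import Counter


def shannon_entropy(label: str) -> float:
    """Shannon entropy, in bits per character, of the characters in label."""
    if not label:
        return 0.0
    counts = Counter(label)
    total = len(label)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_generated(domain: str, min_length: int = 12, min_entropy: float = 3.5) -> bool:
    """Flag a domain whose leftmost label is both long and high entropy.

    Thresholds are illustrative assumptions, not recommended values.
    """
    # Naive shortcut: inspect only the leftmost label of the name.
    label = domain.lower().rstrip(".").split(".")[0]
    return len(label) >= min_length and shannon_entropy(label) >= min_entropy


for name in ["mail.example.com", "x7f3k9q2zv8b1m4w.example.com"]:
    print(name, looks_generated(name))
```

On its own this kind of check is noisy (CDN and cloud hostnames are often long and random-looking), which is one reason a composite score combining several signals is attractive.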

It even laid out a 5-phase implementation architecture with weekly milestones—from prototyping through testing and validation.

I didn't ask for any of this structure. The persona and context I'd provided gave it enough understanding of how our team operates to propose something that actually looked like what an experienced detection engineer would put together.

Step 3: The Code—And the Inevitable Errors

I said, "Let's get started prototyping your specific recommendations. Show me the plan before executing."

The AI immediately started generating SQL—a volume spike detection query with window functions, rolling averages, the whole thing. It was impressive.

And it immediately produced a syntax error.

This is where most people get frustrated and dismiss AI as "not ready." But this is actually where the real collaboration begins.

I pasted the error back: "I'm getting '[UPM146] Expected CloseParen, found Identifier.'" The AI diagnosed the issue—invalid syntax on specific lines—and fixed it. Then I hit a column resolution error. Fixed again. Then a more serious problem: a memory exception because the query was collecting too many domains in aggregation.

This wasn't simple copy-paste anymore. We went from fixing syntax to diagnosing and solving system-level memory errors together. The AI understood the architectural constraints of the query engine and proposed removing heavy aggregations that were only needed for investigation context anyway.
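The memory fix is easier to see in miniature. The sketch below is a Python illustration of the trade-off, not the SQL from the session (the query engine and the actual query aren't shown here): one summary collects every queried domain per host, the other keeps only a count. The function names and the `(host, domain)` row shape are assumptions.

```python
from collections import defaultdict


def heavy_summary(rows):
    """Collect every domain per host: memory grows with total query volume."""
    domains_by_host = defaultdict(list)
    for host, domain in rows:
        domains_by_host[host].append(domain)
    return {
        host: {"queries": len(domains), "domains": domains}
        for host, domains in domains_by_host.items()
    }


def light_summary(rows):
    """Keep only a query count per host: memory grows with the number of hosts."""
    counts = defaultdict(int)
    for host, _domain in rows:
        counts[host] += 1
    return dict(counts)


rows = [("host-a", "a.example"), ("host-a", "b.example"), ("host-b", "a.example")]
print(heavy_summary(rows))
print(light_summary(rows))
```

The detection only needs the counts to decide whether something spiked. The full domain list is investigation context, and it can be pulled in a follow-up query for the handful of hosts that actually alert.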

Debugging became a conversation. Each error message I fed back made the next iteration better, not because the AI was perfect, but because it could reason about the problem in context.

The 'Aha!' Moment: The AI QA'd Its Own Work

Here's where it got really interesting. After the detection was running, I exported the results and fed them back: "Here are the detection results. Do the analysis."

The AI generated its own QA report card—and it was brutally honest. 83% false positive rate. Five out of six alerts were false positives.

But it didn't stop there. It identified three critical logic issues in its own code: the spike/stddev logic was using OR when it should have been AND (too permissive), the baseline window was too weak (should require a full 7 days of data, not just any data point), and dev environments weren't being excluded from the detection scope.
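Those three fixes can be sketched in a few lines. This is a hedged illustration in Python rather than the SQL from the session; `is_spike`, the `dev-` hostname prefix, and the threshold values are all assumptions chosen for the example.

```python
from statistics import mean, pstdev


def is_spike(history, current, *, host, min_days=7, ratio=3.0,
             z_threshold=3.0, excluded_prefixes=("dev-",)):
    """Return True only if current is a spike by BOTH ratio and z-score.

    history: one query count per day for the baseline window.
    All thresholds and the excluded prefix are illustrative assumptions.
    """
    # Fix 3: keep excluded environments out of scope entirely.
    if host.startswith(excluded_prefixes):
        return False
    # Fix 2: require a full baseline window, not just any data point.
    if len(history) < min_days:
        return False
    baseline = mean(history)
    spread = pstdev(history)
    if baseline == 0 or spread == 0:
        return False  # no meaningful baseline to compare against
    ratio_hit = current >= ratio * baseline
    z_hit = (current - baseline) / spread >= z_threshold
    # Fix 1: AND instead of OR, so a single weak signal cannot alert alone.
    return ratio_hit and z_hit


week = [100, 110, 90, 105, 95, 100, 100]
print(is_spike(week, 150, host="laptop-42"))    # z-score trips, ratio does not
print(is_spike(week, 400, host="laptop-42"))    # both conditions trip
print(is_spike(week, 400, host="dev-build01"))  # excluded by prefix
```

With OR, the 150-query day would have alerted: a steady host has a small standard deviation, so even a modest bump produces a large z-score. Requiring the ratio as well filters out that class of false positive.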

The AI's own projection after tuning: the false positive rate drops from 83% to approximately 3%.

This was the moment I truly understood the paradigm shift. The AI wasn't just generating code—it was analyzing results, identifying its own flaws, and proposing specific fixes with projected outcomes. That's not a tool. That's a teammate.

The Final Result

What would have been a multi-week project of research, coding, testing, and tuning was condensed into a single focused session. The output: a detection query ready for production testing, with the spike and standard deviation thresholds correctly combined with AND, a strict 7-day baseline requirement, and exclusions for common false positive sources like guest networks and dev environments.

Not perfect out of the box—nothing ever is in detection engineering. But a dramatically accelerated starting point that would have taken significantly longer to reach through traditional methods.

My Five Principles for Effective AI Collaboration

After this experience (and many others since), here's what I've distilled as the keys to making AI work as a genuine engineering partner:

1. Don't tell AI what to do; have a discussion. My vague prompt, "I want to find anomalous DNS queries in corp. Plan?", led to a comprehensive strategy I hadn't fully mapped out myself. Open-ended prompts invite the AI to bring its own perspective.

2. Ask AI questions. AI brings a different lens. It identified gaps in our existing DNS detections that we hadn't prioritized. Let it challenge your assumptions.

3. Tell AI to ask you questions. The AI's clarifying questions—baseline window? scope? output format?—ensured the prototypes were built correctly from the start. This two-way dialogue front-loads the thinking that would otherwise surface as bugs later.

4. Go BIG. Don't be afraid to ask for a full detection suite. We went from a simple idea to three distinct, production-ready detection prototypes in one session. The worst that happens is you scale back.

5. Use personas. Telling the AI "You are a detection engineer..." was the key to unlocking relevant, context-aware responses. Without it, you get generic code. With it, you get code that understands your environment.

This Power Is Accessible to You, Right Now

If a long-time manager can do it, you can do it even better—because you know the data and systems inside out.

I highly recommend you give it a try. Seriously, it's easier than you think. Pick a detection you've been meaning to build, a script you've been procrastinating on, or an analysis you've been putting off. Give the AI a persona, give it context, and start a conversation.

You know the data, you know the systems—let's see what you can build.

What's your experience using AI in your security workflows? Have you found the "teammate" mode, or are you still in the "tool" phase? Drop a comment—I'd love to hear what's working (and what's not) for you.