AI-Driven Threats: How Attackers Are Using Artificial Intelligence in 2025

The cybersecurity landscape in 2025 is defined by a paradox: the same artificial intelligence (AI) tools designed to protect digital infrastructure are now being used by attackers to their advantage. From hyper-personalized phishing campaigns to self-evolving ransomware, AI has become the ultimate force multiplier for cybercriminals. This article breaks down how adversaries are leveraging AI in 2025, the real-world impacts on businesses and individuals, and actionable strategies to defend against these next-generation threats.

The New Era of AI-Powered Phishing and Vishing

Phishing emails riddled with typos and awkward phrasing are relics of the past. In 2025, attackers use generative AI platforms like ChatGPT and DeepSeek to write messages that are nearly indistinguishable from legitimate communications. Fed with publicly available data, such as LinkedIn profiles, social media posts, or corporate newsletters, these tools produce context-aware lures. For example, a fake invoice might reference a recent project deadline or mimic a colleague's writing style.

Voice phishing (vishing) has also evolved. Attackers now use AI-generated voice and video clones to impersonate executives on phone and video calls. In one case reported in 2024, a finance worker in Hong Kong transferred about $25 million to fraudsters after a deepfake video call with what appeared to be the company’s CFO and colleagues. The deepfakes were reportedly built from publicly available recordings, which shows that even low-resolution video footage can be used for malicious purposes.

Why it works:

- Personalization: AI scrapes data to tailor messages to individual roles, industries, or even personal interests.

- Scale: Automated tools generate thousands of unique phishing emails per hour, each different enough to slip past spam filters that match known templates.

- Adaptability: If a campaign fails, machine learning algorithms analyze detection patterns and refine tactics for the next wave.

- Flawless grammar and professional tone: No typos, awkward phrasing, or broken English.

Figure: AI’s Improvement on Phishing Emails

Deepfake Social Engineering: Trust No One (Without Verification)

Deepfake technology has moved beyond viral memes to become a cornerstone of corporate fraud. In 2025, attackers use AI to create synthetic media that mimics the appearance of executives, government officials, or trusted partners. The $25 million Hong Kong fraud described above reportedly relied on publicly available recordings to replicate voices and mannerisms, convincing an employee to authorize fraudulent transfers.

Key tactics:

- CEO or CFO Fraud: Fake audio or video of a CEO demanding urgent wire transfers.

- Fake Technical Support: Deepfake agents posing as IT staff to gain remote access.

- Disinformation Campaigns: Fabricated videos of executives making inflammatory statements to manipulate stock prices.

Figure: Deepfake Process

Autonomous Malware and Ransomware 2.0

Malware is no longer static code. AI-driven variants, sometimes described as agentic or self-learning malware, analyze their environment in real time and adapt to evade detection. For instance, if a strain detects that it is running in a sandbox, it can delay malicious activity or mimic benign processes. This adaptability makes traditional signature-based antivirus tools far less effective on their own.

Ransomware has also embraced AI through Ransomware-as-a-Service (RaaS) platforms. These tools automatically identify high-value targets (e.g., hospitals, law firms) and adjust ransom demands based on the victim’s revenue or insurance coverage. The November 2024 Blue Yonder attack shows what is at stake: ransomware disrupted supply chain software used by Starbucks and Morrisons, forcing manual workarounds and delaying shipments.

Defense gap: Legacy systems struggle to keep pace with AI’s iterative learning. A 2025 Splashtop study found that organizations using AI-augmented security tools reduced breach response times by 96% compared to those relying on manual methods.

Static Malware Behavior vs. AI-Driven Malware: Key Differences

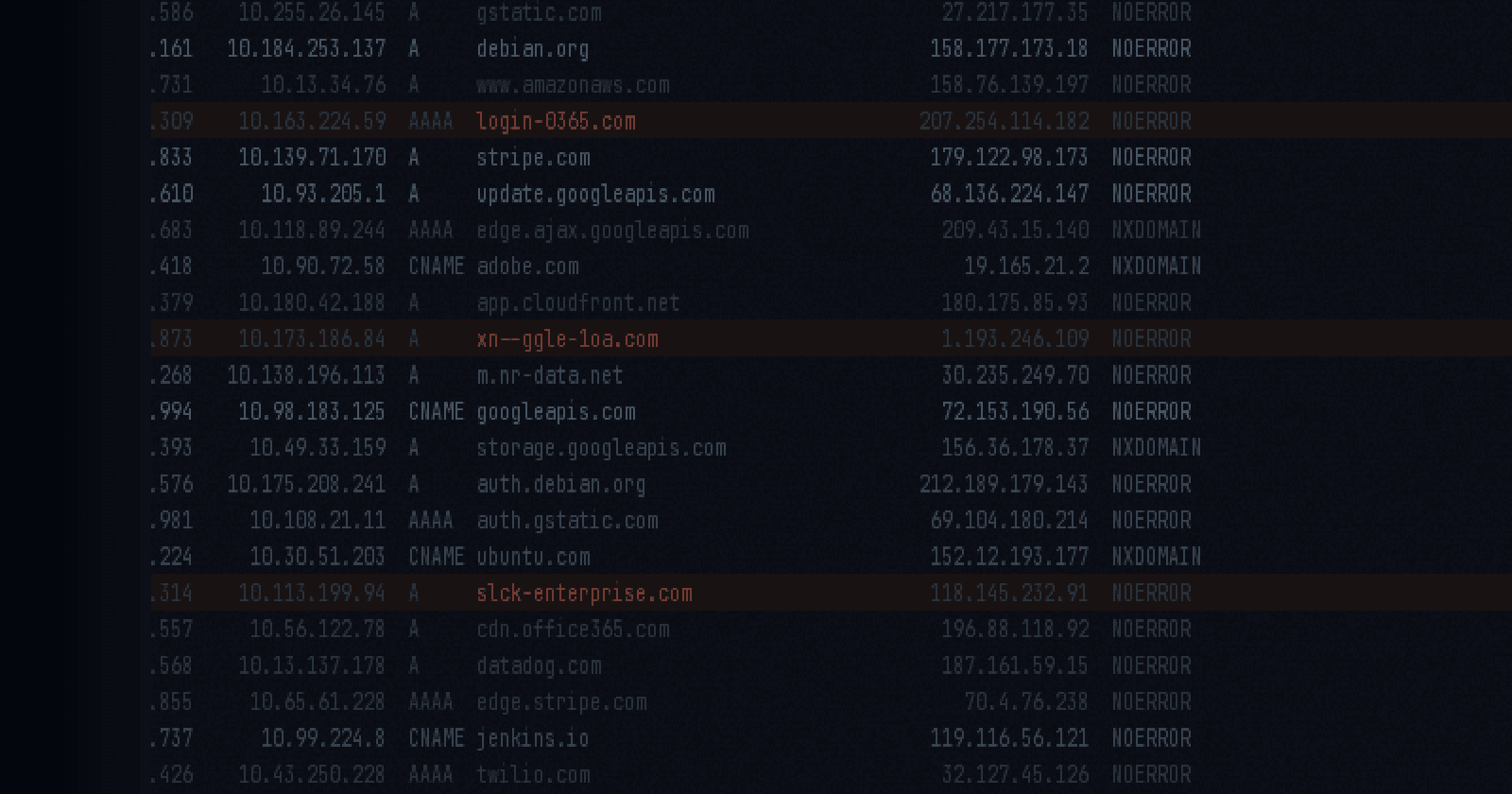

Credential Stuffing and AI-Powered Password Cracking

Weak passwords are easier than ever to exploit. AI models, such as PassGAN (Password Generative Adversarial Network), can reportedly crack 51% of common passwords in under a minute by learning patterns from leaked datasets. In 2025, credential-stuffing bots replay leaked username and password pairs, along with guesses generated by such models, across many sites, often succeeding within hours.

The numbers:

- 18-character numeric passwords: Cracked in <1 day.

- 10-character alphanumeric + symbols: Cracked in ~1 week.
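One way to see why the shorter but more varied password holds out longer is to compare raw search spaces. The sketch below is plain arithmetic, not a model of PassGAN. It assumes a 94-symbol alphabet (printable ASCII without the space) for the mixed case, and the helper names are illustrative. Real guessers exploit human patterns, so actual cracking times can be far shorter than a brute-force estimate suggests.

```python
import math


def keyspace(alphabet_size: int, length: int) -> int:
    """Number of distinct passwords of exactly `length` characters."""
    return alphabet_size ** length


def entropy_bits(alphabet_size: int, length: int) -> float:
    """Entropy of a uniformly random password, in bits."""
    return length * math.log2(alphabet_size)


# Digits only, 18 characters.
numeric_18 = keyspace(10, 18)

# Assumed alphabet: 26 lowercase + 26 uppercase + 10 digits + 32 symbols = 94.
mixed_10 = keyspace(94, 10)

print(f"18-digit numeric keyspace: {numeric_18:.3e}")
print(f"10-char mixed keyspace:    {mixed_10:.3e}")
print(f"ratio (mixed / numeric):   {mixed_10 / numeric_18:.1f}")
print(f"entropy: {entropy_bits(10, 18):.1f} bits vs {entropy_bits(94, 10):.1f} bits")
```

Under these assumptions the 10-character mixed password has a search space roughly 54 times larger than the 18-digit one, despite being much shorter. The figures only hold for passwords chosen at random.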

Even multi-factor authentication (MFA) isn’t foolproof. Attackers bypass SMS-based codes using SIM-swapping or AI-driven social engineering.

Figure: Password Complexity vs. AI Cracking Time (2025 Benchmarks)

Defense Strategies: Fighting AI with AI

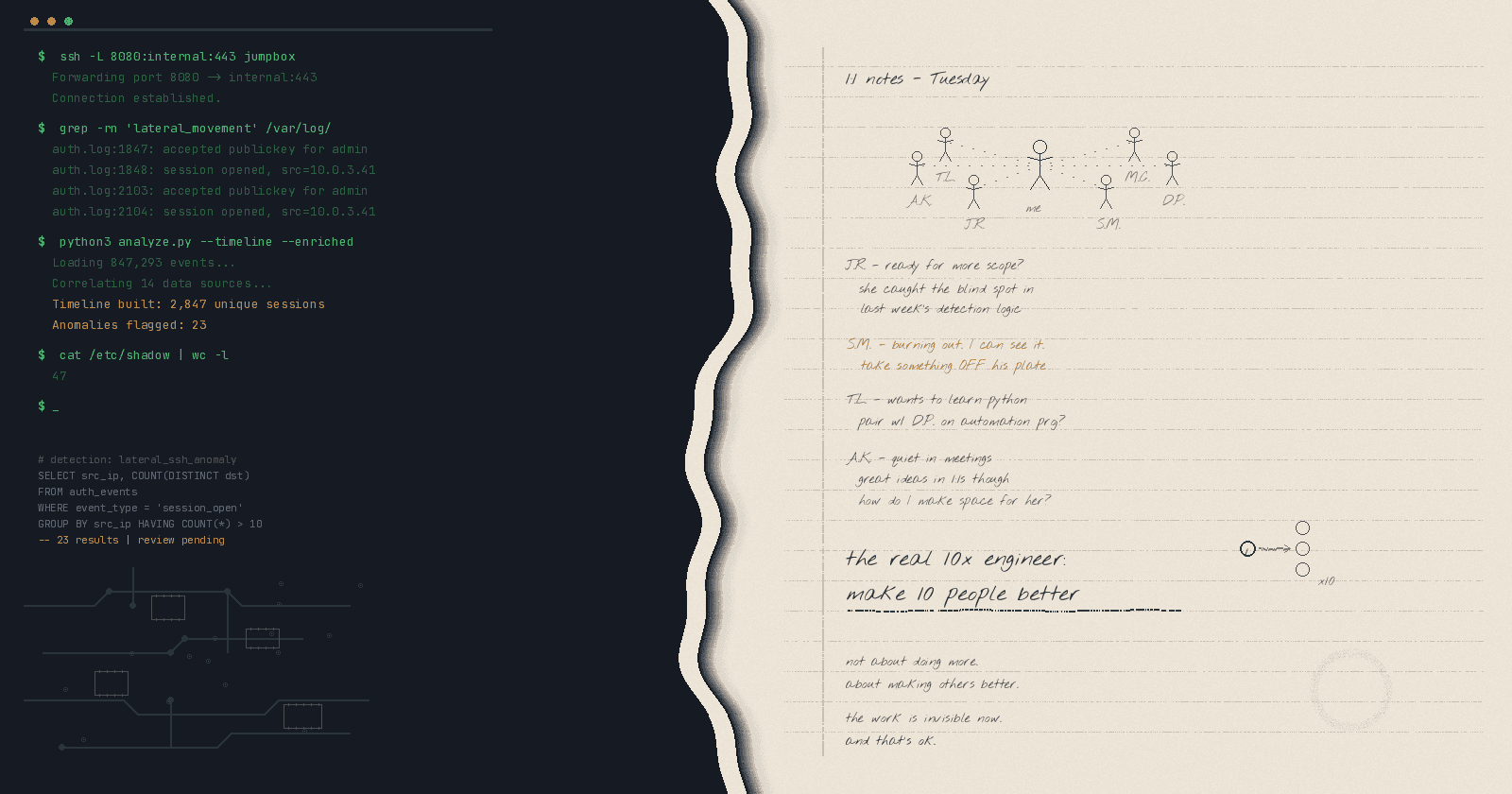

1. Deploy AI-Driven Threat Detection

Modern security tools use machine learning to establish network baselines and flag anomalies. For example, an AI might notice that a device accessing sensitive files at 3 a.m. is statistically unusual and automatically isolate it. Darktrace’s 2025 survey found that 74% of organizations using AI-augmented defenses neutralized ransomware before it could do damage.
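The baseline-and-anomaly idea can be illustrated with a simple z-score check. This is a minimal sketch rather than how any commercial product works: the function name, the threshold of 3 standard deviations, and the sample data are all assumptions made for illustration.

```python
from statistics import mean, pstdev


def is_anomalous(history: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Return True if `observed` deviates from the baseline by more than
    `threshold` standard deviations."""
    if len(history) < 2:
        return False  # too little data to form a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is unusual.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical baseline: sensitive-file reads by one device during the
# 3 a.m. hour on each of the last 14 nights.
baseline_3am = [0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0]

print(is_anomalous(baseline_3am, 40))  # a burst of 40 reads
print(is_anomalous(baseline_3am, 0))   # a quiet night
```

With this sample data, the burst of 40 reads is flagged and the quiet night is not. Production systems typically model many signals at once, but the principle of comparing current behavior against a learned baseline is the same.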

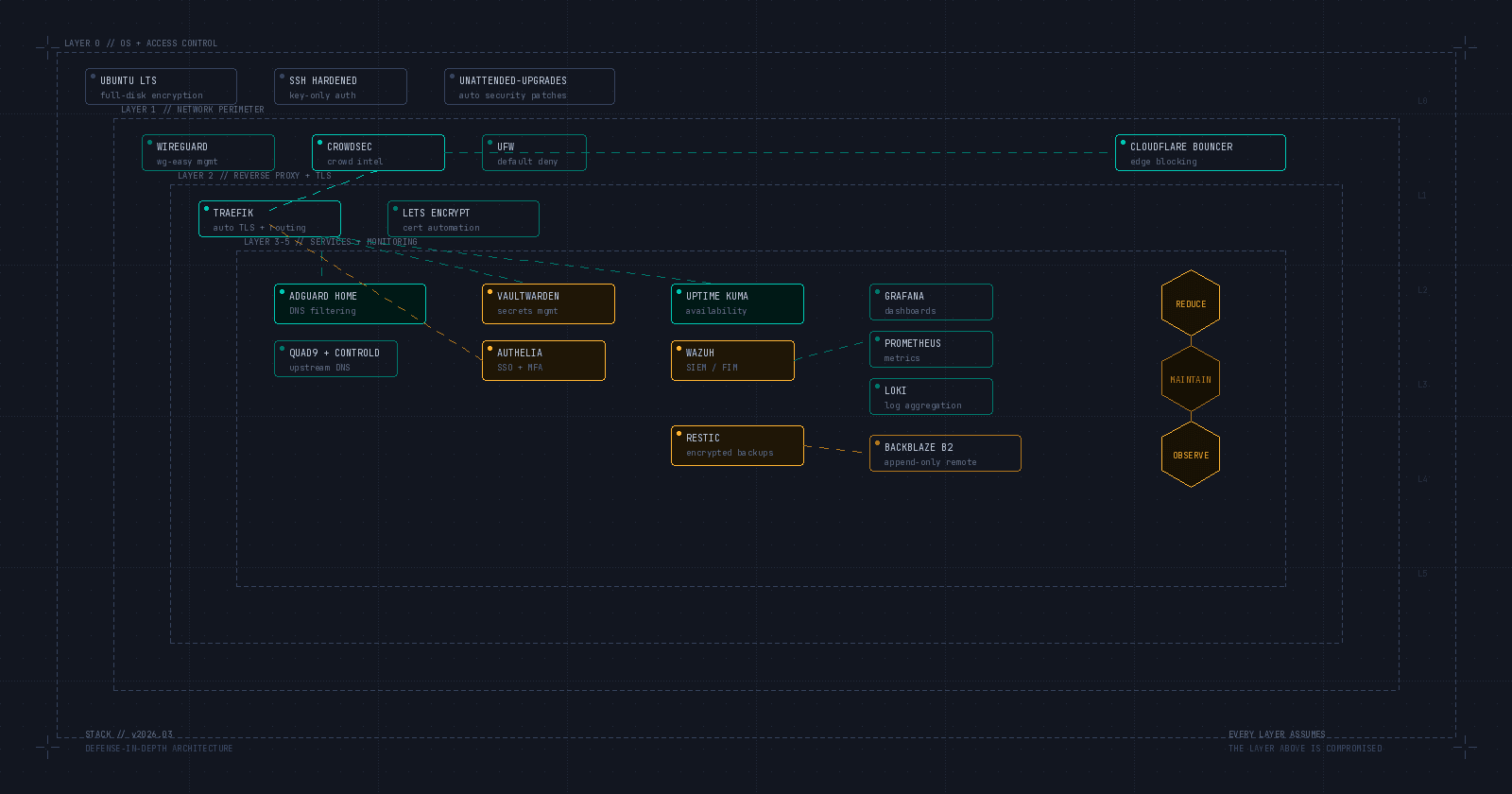

2. Adopt Zero-Trust Architecture

Assume any user or device could be compromised. Zero-trust frameworks verify every request continuously and grant access only to what each role needs. After the Blue Yonder breach, companies like Sainsbury’s mitigated damage by segmenting supply chain systems from core networks.
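A deny-by-default access check captures the core of this approach. The sketch below is illustrative only: the `AccessRequest` fields, the `GRANTS` table, and the user and resource names are hypothetical and do not come from any particular zero-trust product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    resource: str
    mfa_verified: bool      # was the user strongly authenticated for this session?
    device_compliant: bool  # does the device pass posture checks (patched, managed)?


# Hypothetical least-privilege grants: each user sees only what the role needs.
GRANTS: dict[str, set[str]] = {
    "alice": {"supply-chain-dashboard"},
    "bob": {"payroll-db"},
}


def authorize(req: AccessRequest) -> bool:
    """Deny by default; a request must pass every check on every call."""
    if not req.mfa_verified:
        return False
    if not req.device_compliant:
        return False
    return req.resource in GRANTS.get(req.user, set())


ok = AccessRequest("alice", "supply-chain-dashboard", True, True)
lateral = AccessRequest("alice", "payroll-db", True, True)
print(authorize(ok), authorize(lateral))
```

Because the check runs on every request, a stolen session on one system does not automatically open others, which is the same effect network segmentation aims for.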

3. Train Employees on AI-Specific Threats

Regular drills using AI-generated phishing simulations help teams recognize sophisticated lures. Focus on “emotional triggers” like urgency or authority, which are common themes in deepfake scams.
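For training material, it can help to show which trigger phrases appear in a sample message. The sketch below is a deliberately simple keyword scan, not a phishing detector: the `TRIGGERS` phrase lists and the function name are assumptions chosen for illustration, and a well-written AI lure can easily avoid them.

```python
import re

# Illustrative phrase lists; a real program would tune these to its own drills.
TRIGGERS: dict[str, list[str]] = {
    "urgency": ["urgent", "immediately", "right away", "deadline"],
    "authority": ["ceo", "cfo", "director", "legal", "compliance"],
    "secrecy": ["confidential", "do not share", "keep this between us"],
}


def trigger_report(message: str) -> dict[str, list[str]]:
    """Return the trigger phrases found in `message`, grouped by category."""
    text = message.lower()
    report: dict[str, list[str]] = {}
    for category, phrases in TRIGGERS.items():
        # Word boundaries avoid matching inside longer words.
        hits = [p for p in phrases if re.search(r"\b" + re.escape(p) + r"\b", text)]
        if hits:
            report[category] = hits
    return report


sample = "This is the CFO. I need a wire transfer sent immediately. Keep this between us."
print(trigger_report(sample))
```

A report like this works best as a discussion aid in drills: it highlights the pressure tactics in a message so employees learn to pause and verify through a separate channel.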

4. Upgrade to Phishing-Resistant MFA

Replace SMS codes with hardware tokens or biometric verification. Microsoft’s 2025 guidelines recommend FIDO2 security keys for high-risk accounts.

Conclusion: The Double-Edged Sword of AI

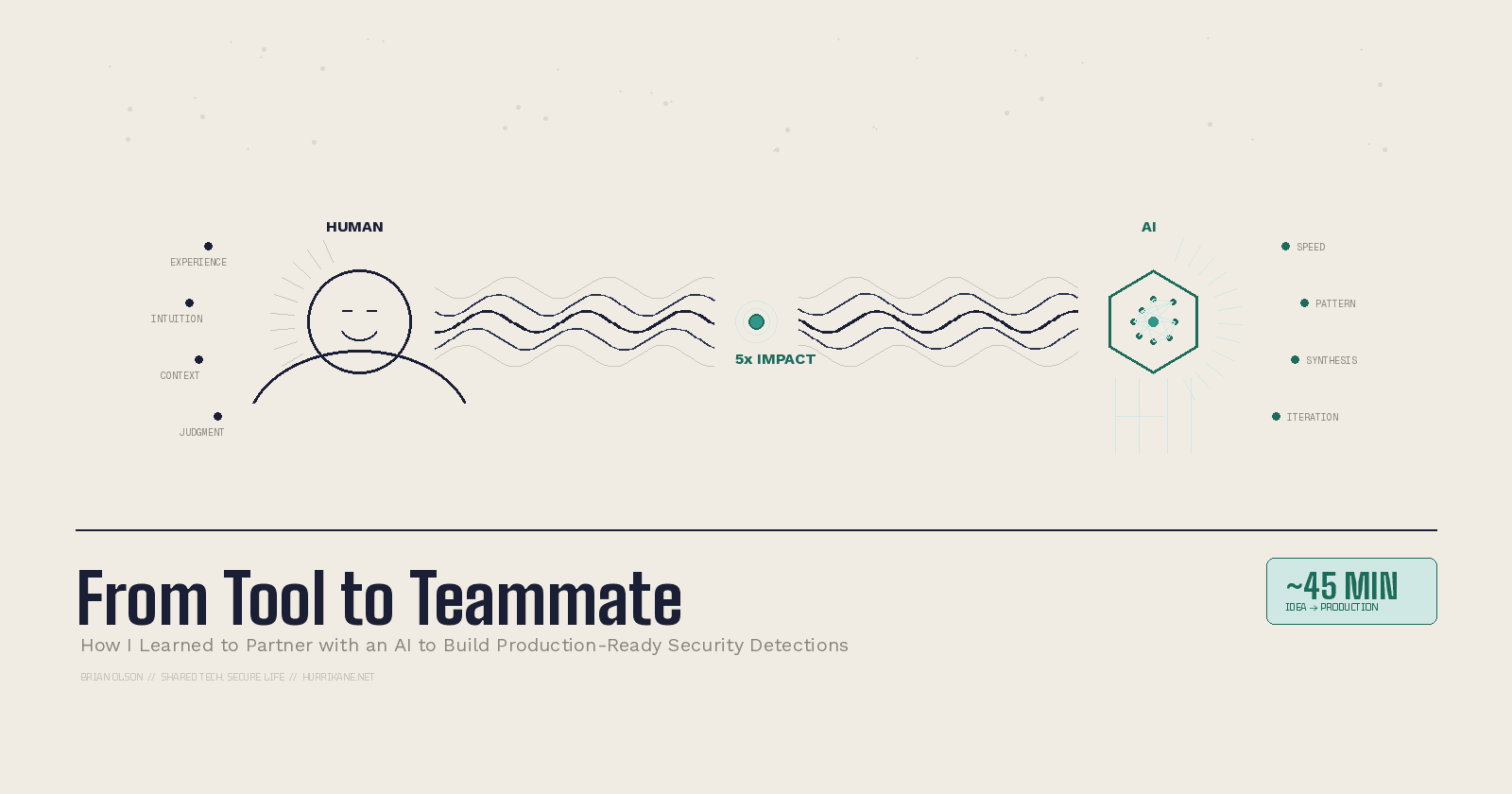

AI is both the problem and the solution in 2025’s cybersecurity arms race. While attackers exploit generative models for fraud and malware, defenders counter with adaptive tools that predict and neutralize threats. The lesson is clear: Organizations that integrate AI into their security strategies will survive, and those that don’t will become statistics.

Final recommendation: Audit your defenses for AI readiness. If your incident response plan doesn’t mention deepfakes or autonomous malware, it’s already outdated.